A volumetric video, or hologram, is a capture of a real 3D space or performance that can then be viewed from any angle in AR or VR, producing realistic, immersive and interactive 3D content. Volumetric video holograms combine the visual quality of video with the immersion that only spatialized content can offer, and industries such as sports, fashion, media and entertainment are already using the technology to enhance their offerings and increase customer engagement.

Holograms can be created in various ways, using technologies such as RGB-D/laser sensors, photogrammetry, LiDAR, and stereoscopic/multiview camera arrays. For creatives, the technology matters less than the results and creative possibilities it offers, so we have divided the available volumetric capture options into three categories: high-end capture, sensor-based capture, and mobile device capture.

High-End Volumetric Video Capture

High-end volumetric video captures are done in professional recording studios, using systems of many cameras positioned around the subjects. The cameras record the performance from every possible angle, and the footage is then converted into holograms. Some studios, like Canon’s Volumetric Studio Kawasaki or Microsoft’s Mixed Reality Capture Studios, work with more than 100 cameras. The results are the most realistic currently available.

Pros

These studios produce volumetric video of outstanding quality and can offer their clients good support.

Cons

Because of the technology and operating costs involved in shooting in a studio, clients should expect to spend a considerable amount of money on the capture phase. Having to travel to a studio in person can also be an issue because of COVID-19 and travel restrictions.

Examples of high-quality volumetric capture studios:

- Metastage (Los Angeles)

- 4DR (Eindhoven)

- Crescent (Tokyo)

- Dimension Studio (London)

Sensor-Based Volumetric Video Capture

A more accessible way of capturing volumetric video is by using depth-sensing cameras, like Azure Kinects. These devices have depth-sensing technology at their core. Some, like the original Kinect, project a known infrared pattern onto the scene and measure how objects distort it; others, like the Azure Kinect, use time-of-flight, measuring how long emitted infrared light takes to bounce back. Either way, the result is a per-pixel depth map that can be computed into a volumetric video capture. Artists, creatives and independent directors have become active in the volumetric video sphere thanks to the affordability of these devices. Such captures are less realistic, but creatives also use the visual characteristics of these holograms as a form of language.
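As a rough illustration of how a depth frame becomes 3D data, the sketch below back-projects each depth pixel into a 3D point using the standard pinhole camera model. It is a minimal example, not the pipeline of any particular capture tool: the function name `depth_to_points`, the intrinsics (`fx`, `fy`, `cx`, `cy`), the millimetre depth unit, and the use of `0` to mark pixels with no reading are all assumptions made for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def depth_to_points(
    depth_mm: List[List[int]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth image (assumed millimetres) into 3D points (metres)."""
    points: List[Point] = []
    for v, row in enumerate(depth_mm):        # v: pixel row
        for u, d in enumerate(row):           # u: pixel column
            if d <= 0:                        # assumed marker for "no reading"
                continue
            z = d / 1000.0                    # millimetres -> metres
            x = (u - cx) * z / fx             # pinhole model, horizontal axis
            y = (v - cy) * z / fy             # pinhole model, vertical axis
            points.append((x, y, z))
    return points


# Hypothetical 2x2 depth frame and intrinsics, chosen only to keep the numbers simple.
frame = [[1000, 0], [2000, 1000]]
cloud = depth_to_points(frame, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
```

Repeating this for every frame of a recording gives a sequence of point clouds, which is the raw material a volumetric video is built from; real tools typically add colour, filtering and meshing on top.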

Pros

Depth-sensing cameras are affordable, and there is a very supportive community around this form of volumetric video capture. Today, it’s possible to capture a full 3D scan of a performance with several cameras positioned at different angles.
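To give a sense of how captures from several cameras can be combined, the sketch below moves each camera's points into one shared coordinate system and concatenates them. It is a simplified illustration: the helper `to_world` is hypothetical, and each camera's pose is reduced to a rotation about the vertical axis plus a position, whereas a real rig would normally be calibrated to obtain a full rotation and translation per camera.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def to_world(points: Sequence[Point], yaw_deg: float, position: Point) -> List[Point]:
    """Rotate camera-space points about the vertical (y) axis, then translate."""
    a = math.radians(yaw_deg)
    c, s = math.cos(a), math.sin(a)
    tx, ty, tz = position
    return [
        (c * x + s * z + tx, y + ty, -s * x + c * z + tz)
        for x, y, z in points
    ]


# Two hypothetical cameras facing each other, 2 m either side of the subject.
front_points = [(0.1, 0.0, 1.9)]   # as seen by the front camera
back_points = [(0.1, 0.0, 1.9)]    # as seen by the back camera

merged = (
    to_world(front_points, yaw_deg=0.0, position=(0.0, 0.0, -2.0))
    + to_world(back_points, yaw_deg=180.0, position=(0.0, 0.0, 2.0))
)
```

In this toy setup the two cameras each contribute a point on opposite sides of the subject, which is why adding viewpoints fills in the surfaces a single camera cannot see.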

Cons

Holograms captured solely with depth-sensing cameras are not as true-to-life as captures made in high-end studios. Yet artists and creatives have been able to work around this by using the visual characteristics of this kind of hologram as an asset in their creations.

Examples of software for depth-sensing volumetric capture:

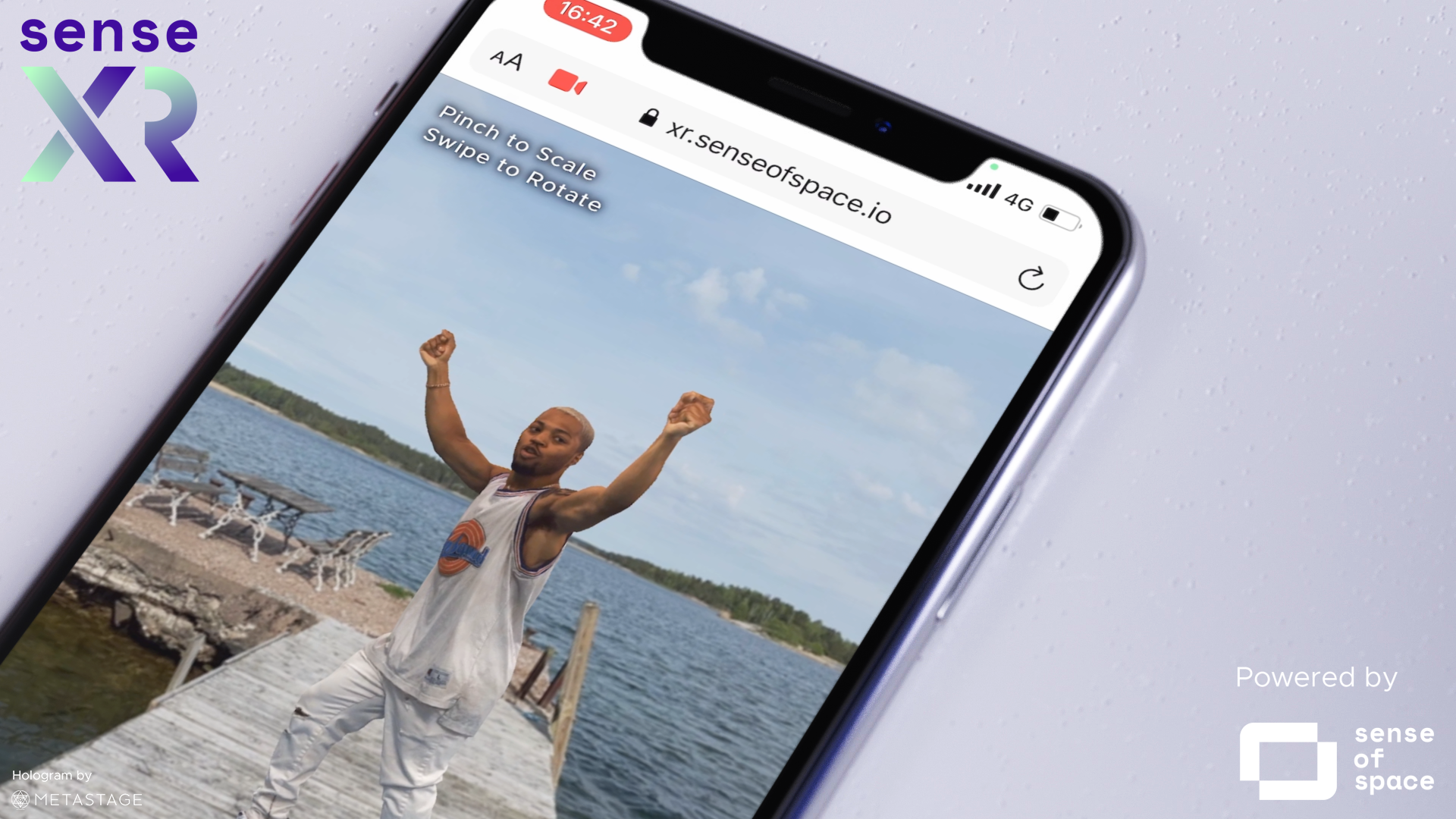

Mobile Device Capture

The newest form of volumetric capture on the market is done solely with mobile phones. When the iPhone X was released, developers began using its built-in TrueDepth camera as a mobile 3D scanner, paving the way for what is today a form of volumetric video capture, enhanced by the LiDAR sensor in the newest iPhones. A few apps now allow any user to capture a hologram with their phone, with no previous knowledge necessary. This opens the door to 3D communication, as people can now record themselves in 3D and share their holograms with others in AR. Mobile device capture is ideal for situations where social distancing is key, or for use cases in which the content should be more personal, with a “home-made” feel.

Pros

This is the most accessible form of volumetric capture available: an iPhone and an app are enough to make it happen, with no need to go to a studio. This technology also unlocks user-generated content for AR and 3D communication.

Cons

Because the capture is done with a single camera, it can only generate “one-sided” holograms, or the back view has to be generated by machine learning, which doesn’t look as good as reality (yet). Either way, the result falls short of the fully realistic 3D scans that are possible in high-end studios or with depth-sensing cameras.

Examples of volumetric capture apps: